If you have been following the AI space then you must have come across terms like GPU and TPU. These chips have become quite important for training and running AI models. While both serve similar purposes, they differ in architecture, performance scaling, ecosystem support, and deployment flexibility. That’s why in this guide I have decided to talk about both the chips. So without wasting anymore time. Let’s get started.

GPU Architecture at a Glance

A GPU has thousands of smaller cores designed to handle parallel operations. Each core is less powerful than a CPU core, but the sheer number allows GPUs to run many calculations simultaneously. This parallel approach works well for matrix operations, which are fundamental to neural networks.

NVIDIA dominates the GPU market for AI. The latest flagship is the Blackwell Ultra GPU, known as the B300. It contains 208 billion transistors and delivers 15 petaflops of FP4 compute performance.

Each B300 GPU includes 288 GB of HBM3e memory with 8 terabytes per second of bandwidth. The memory capacity matters because larger models need space to load their weights and intermediate values.

Popular GPU Options in 2026

The NVIDIA H100 remains common for training large language models. The newer B200 and B300 are seeing rapid adoption. A single GB300 NVL72 rack integrates 72 B300 GPUs with 36 NVIDIA Grace CPUs and delivers 1.1 exaflops of FP4 inference performance.

The GB300 NVL72 also enables 50 times higher output for AI factories compared to Hopper-based systems. That matters if you’re running production services at scale.

What Are TPUs? Understanding Tensor Processing Units

TPUs are custom chips built by Google for tensor operations. Google introduced the first TPU internally in 2015 and publicly showed the technology in 2016. Unlike GPUs, which are general-purpose accelerators, TPUs use specialized architecture optimized for AI workloads.

TPU Architecture – Systolic Arrays & Specialized Design

TPUs use systolic arrays, a specialized arrangement of processors that multiply matrices. This approach reduces data movement and power consumption compared to general-purpose architectures.

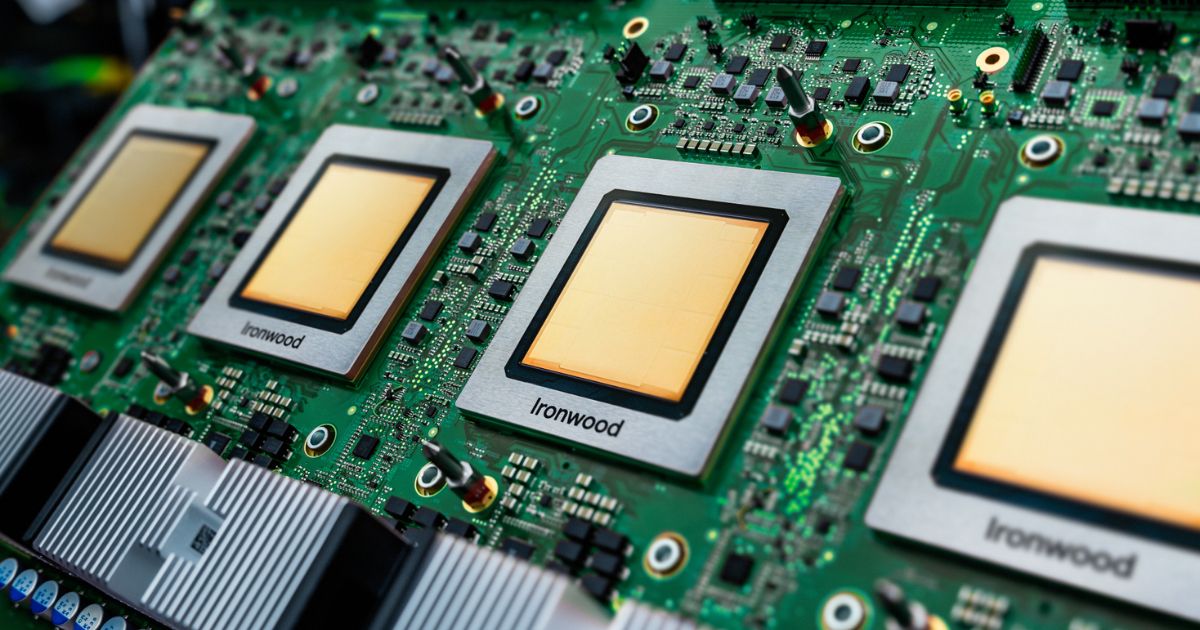

The latest generation is Ironwood, Google’s seventh-generation TPU. It delivers 4,614 FP8 TFLOPS of peak performance and includes 192 GB of HBM3e memory with 7.4 terabytes per second of bandwidth.

Ironwood can scale to 9,216 chips in a superpod, delivering 42.5 exaflops of total compute power. Each chip connects via high-speed Inter-Chip Interconnect operating at 9.6 terabits per second, enabling efficient communication across thousands of processors.

TPU Generations 2026 Overview

Google introduced Trillium (TPU v6) in 2024 with 30 petaflops of dense compute. Ironwood (TPU v7) came later in 2025 and offers more than 4 times the performance per chip compared to Trillium. While the Ironwood excels in inference, it also supports training. Anthropic plans to deploy one million Ironwood TPUs to power Claude models.

GPUs vs TPUs Performance Metrics

Raw performance numbers tell part of the story. Real-world application needs looking at several other factors.

Raw Computational Performance

The B300 delivers up to 15 petaflops of dense FP4 inference performance per GPU. At rack scale, the GB300 NVL72 achieves 1.1 exaflops of FP4 compute.

Ironwood delivers 4,614 FP8 TFLOPS per chip. In a full superpod with 9,216 chips, that scales to 42.5 exaflops of total compute.

These numbers sound impressive, but they represent peak theoretical performance. Real workloads don’t always reach these peaks due to bottlenecks in data movement and memory access.

Energy Efficiency, Operations Per Watt

TPUs pull ahead here. They convert more computational work per watt of power compared to GPUs. The B300 consumes 1,400 watts per chip. An Ironwood superpod with 9,216 chips operates at lower power density per unit of compute.

Google states Ironwood delivers 4 times better per-chip efficiency for both training and inference compared to Trillium. This means lower electricity bills and less heat to manage in data centers. For operations teams, energy efficiency translates directly to reduced running costs and smaller facility requirements.

Training Speed and Scalability

Both architectures scale to thousands of chips. GPUs use NVLink and NVSwitch for chip-to-chip communication. TPUs use Inter-Chip Interconnect with optical switching at massive scales.

The GB300 NVL72 rack connects 72 GPUs with 1.8 terabytes per second of NVLink bandwidth per GPU. The Ironwood superpod interconnects 9,216 chips with 9.6 terabit-per-second optical switching.

Inference Latency and Throughput

Ironwood was purpose-built for inference. Google designed it specifically for “the age of inference” where models need extensive computation during serving.

The GB300 NVL72 delivers real-time video generation improvements. Generating a 5-second video sequence through NVIDIA Cosmos takes nearly 90 seconds on Hopper GPUs. The Blackwell Ultra platform enables real-time generation with 30 times performance improvement.

For inference workloads serving thousands of concurrent users, latency and throughput both matter. Different workloads have different bottlenecks.

Memory Bandwidth

The B300 provides 8 terabytes per second of memory bandwidth supporting 288 GB of HBM3e memory per GPU.

Ironwood offers 7.4 terabytes per second supporting 192 GB of HBM3e memory per chip. This is slightly lower peak bandwidth, but the memory capacity per chip in Ironwood enables larger model partitions to fit locally.

TPUs vs GPUs at Scale

Total cost of ownership includes hardware, power, cooling, networking, and operational overhead.

Total Cost of Ownership (TCO) Breakdown

GPU costs appear straightforward until you total everything. NVIDIA B200 GPUs cost between $45,000 and $50,000 each. Complete 8-GPU server systems exceed $500,000 before networking and infrastructure.

GB300 systems require liquid cooling, 800-gigabit networking, and specialized infrastructure. The infrastructure gap is larger than previous generations. Organizations deploying Blackwell need immediate facility upgrades.

TPUs through Google Cloud use a rental model primarily. Pricing varies by workload and region, but Google reports 44 percent lower total cost of ownership per Ironwood chip compared to GB200 servers when accounting for the full system cost.

The advantage widens at massive scale. Organizations buying thousands of chips see better pricing on TPU infrastructure.

Price Per Performance Analysis

Comparing $/FLOP directly is misleading because sustained performance differs from peak performance. Nvidia’s published numbers represent peak theoretical performance. Real utilization runs lower due to power and thermal constraints.

TPUs tend to achieve higher model FLOP utilization in practice. This means the effective compute per dollar often favors TPUs for large-scale deployments. For smaller operations, GPUs often cost less upfront because you can buy individual units and use existing cloud infrastructure.

Long-Term Cost Considerations

Hardware depreciates quickly. Each GPU generation lasts about 18 months before the next generation launches. Buying equipment that will be outdated in 2 years is expensive compared to renting.

Cloud pricing changes with demand and competition. Right now both Google Cloud and cloud GPU providers aggressively compete for large customers. This competition keeps prices reasonable.

Migration costs matter. Moving trained models from one platform to another requires engineering work. Switching from CUDA to TPU involves rewriting optimized kernels.

Ecosystem and Software Compatibility

This is where GPUs win decisively for development. The CUDA ecosystem is enormous.

GPU Ecosystem Dominance

CUDA remains the standard framework for GPU computing. PyTorch, TensorFlow, and nearly every AI framework run optimized on CUDA.

NVIDIA provides TensorRT-LLM for inference optimization. The community has written millions of CUDA kernels. Most open-source AI projects target GPUs first because the developer audience is largest.

If your team knows PyTorch and CUDA, GPUs let you move faster. You can find examples, tutorials, and libraries for almost any AI task. The downside is lock-in. You’re committed to the NVIDIA platform and pricing.

TPU Software Stack

TPUs integrate tightly with TensorFlow and JAX. Google’s XLA compiler optimizes code for TPU hardware. PyTorch support is growing through PyTorch/XLA but remains less mature than GPU support.

Google built Pathways, its ML runtime for distributed computing. It enables efficient work across thousands of TPU chips. Fewer open-source projects target TPUs. If your model wasn’t designed for TPU, porting requires work. You can’t just drop a GPU model onto a TPU and expect good performance.

Portability & Migration Challenges

Moving code between platforms hurts. A model optimized for CUDA won’t automatically perform well on TPU. You’ll need to rewrite some parts.

If multi-vendor flexibility matters, GPUs win. You can switch between NVIDIA, AMD, and others. TPUs lock you into Google Cloud. Hybrid strategies exist. Some teams use GPUs for development and TPUs for large-scale serving. This adds complexity.

Technical Specifications

Performance in Real-World Scenarios

Google’s Internal Workloads

Google uses Ironwood for Gemini model serving. YouTube recommendations run on TPUs. Gmail, Search, and other services leverage TPU inference. This is production validation at scale. Google has spent years optimizing Ironwood specifically for these workloads.

Enterprise AI Infrastructure

Major cloud providers are diversifying. Microsoft Azure uses both NVIDIA GPUs and custom hardware. AWS is developing Trainium and Inferentia chips.

Meta runs a mix of GPUs and custom silicon. The trend is clear: massive operations use multiple accelerator types. Hybrid deployments are common. Teams use GPUs for training and TPUs for inference, or vice versa.

Making Your Hardware Choice

There’s no universal winner. The choice depends on your specific situation.

Choose GPUs if you value ecosystem breadth, rapid development, and flexibility. They’re the safer choice for most organizations.

Choose TPUs if you’re running massive inference operations, can commit to Google Cloud, and want lower costs at scale. Ironwood was purpose-built for this use case.

The margin between them is narrowing. Both are excellent hardware. The difference is engineering philosophy and business constraints.

Benchmark on your specific workload before committing. Run your actual models and measure real performance and costs. Published specifications don’t capture everything that matters in production.

FAQs

Neither GPU nor TPU is universally better. GPUs excel in flexibility and broad ecosystem support for diverse AI tasks, while TPUs shine in efficiency for large-scale tensor operations on Google Cloud.

No, Google TPU is not a GPU. TPUs are custom ASICs designed specifically for tensor computations, unlike general-purpose parallel processors like GPUs.

Yes, TPUs generally deliver better performance per watt for AI workloads. Ironwood TPUs achieve 2-4x higher efficiency than comparable GPUs like Blackwell in optimized inference tasks.